While the use of goldfish loss and similar techniques can mitigate some risks and demonstrate a commitment to responsible AI development, they are not a substitute for obtaining proper permissions and licenses. AI makers should adopt a holistic approach that combines technical, legal, and ethical strategies to protect themselves from legal actions and ensure the fair use of copyrighted works.

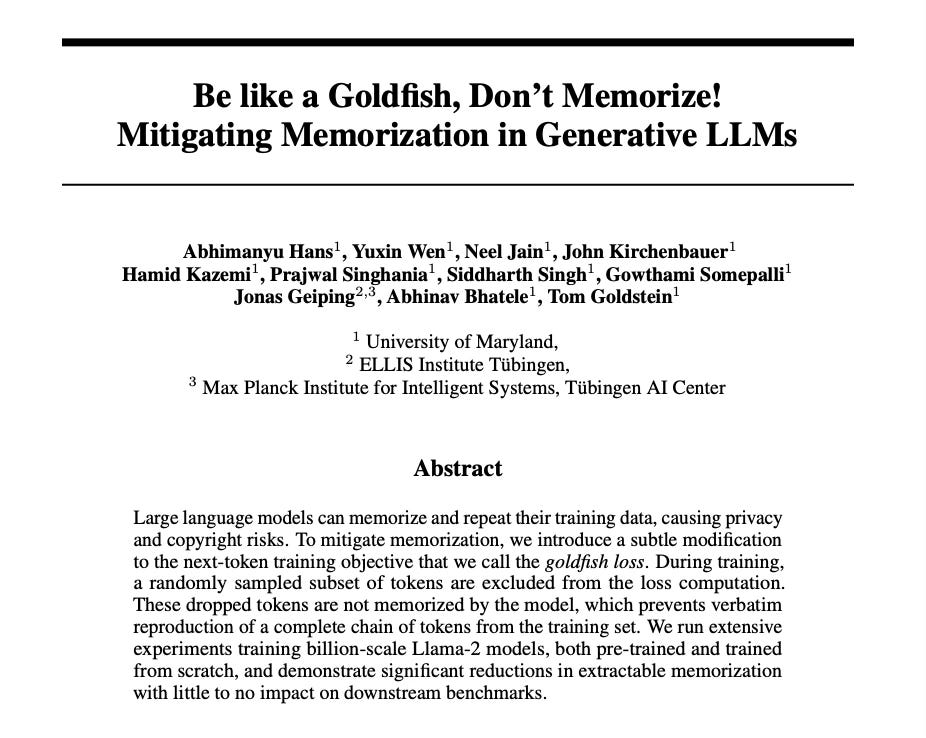

Question 1 of 5 for ChatGPT-4o: Please read the paper "Be like a Goldfish, Don’t Memorize! Mitigating Memorization in Generative LLMs" and tell me what it says in easy to understand language and what the strengths and weaknesses of the paper are.

ChatGPT-4o’s answer:

Summary of the Paper

The paper "Be like a Goldfish, Don’t Memorize! Mitigating Memorization in Generative LLMs" proposes a method called "goldfish loss" to mitigate memorization in large language models (LLMs). Here's a simplified explanation and the strengths and weaknesses of the paper:

Main Idea

Memorization Issue: Large language models can memorize and reproduce parts of their training data verbatim, which poses privacy and copyright risks.

Goldfish Loss: The goldfish loss technique modifies training by excluding a pseudo-randomly chosen subset of tokens (roughly one in every k) from the loss computation. Because the model is never trained to predict those tokens, it becomes much harder for it to memorize and reproduce exact sequences from the training data.

Method

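During training, a fraction of the tokens (about 1/k, where k is a tunable parameter) is excluded from the loss calculation, so the model receives no training signal for predicting them.

This encourages the model to learn general patterns rather than memorize specific sequences.

At inference time, when generation reaches a position whose token was excluded during training, the model has to guess. Its guess is likely to differ from the original token, so the output drifts away from the training sequence and verbatim reproduction becomes less likely.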
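The method above fits in a few lines. The sketch below is a minimal illustration, not the authors' code: it assumes the model has already produced the log-probability of each correct next token (`target_log_probs`, with made-up values) and uses the simplest masking rule, dropping every k-th position. The function names are illustrative.

```python
from typing import List, Sequence


def static_goldfish_mask(n_tokens: int, k: int = 4) -> List[bool]:
    """One flag per position: True = the position counts toward the loss.

    Simplest rule: drop every k-th position, so about 1/k of the targets
    are excluded from the loss.
    """
    if k < 2:
        raise ValueError("k must be at least 2")
    return [(i + 1) % k != 0 for i in range(n_tokens)]


def standard_loss(target_log_probs: Sequence[float]) -> float:
    """Ordinary next-token loss: mean negative log-likelihood over all positions."""
    return -sum(target_log_probs) / len(target_log_probs)


def goldfish_loss(target_log_probs: Sequence[float], mask: Sequence[bool]) -> float:
    """Mean negative log-likelihood over the positions the mask keeps.

    In a real training loop, dropped positions would contribute no gradient,
    so the model is never trained to reproduce those exact tokens.
    """
    kept = [-lp for lp, keep in zip(target_log_probs, mask) if keep]
    if not kept:
        raise ValueError("mask drops every position")
    return sum(kept) / len(kept)


if __name__ == "__main__":
    # Hypothetical log-probabilities the model assigns to each correct next token.
    log_probs = [-0.5, -1.0, -0.25, -4.0, -0.5, -1.5, -0.75, -2.0]
    mask = static_goldfish_mask(len(log_probs), k=4)
    print(mask)                              # [True, True, True, False, True, True, True, False]
    print(standard_loss(log_probs))          # 1.3125
    print(goldfish_loss(log_probs, mask))    # 0.75
```

Nothing about the model or optimizer changes; only the averaging step skips some positions. On this toy input the goldfish loss is lower simply because the two dropped positions happen to have the lowest log-probabilities, so the comparison says nothing about model quality.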

Experiments

The authors conducted extensive experiments with LLaMA-2 models, both pre-trained and trained from scratch.

Results showed significant reductions in extractable memorization with minimal impact on downstream benchmarks.

Strengths

Simplicity: The goldfish loss method is simple to implement and doesn't require complex modifications to existing training processes.

Effectiveness: The experiments demonstrate a significant reduction in memorization, making it harder for models to reproduce exact training sequences.

Minimal Performance Impact: The method has little to no impact on the model's performance on standard benchmarks, maintaining the utility of the LLMs.

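Versatility: Because it only changes which tokens count toward the loss, it can be applied to models of different sizes and to various training setups, although how well its benefits hold at the very largest scales remains an open question.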

Practical Relevance: Addresses real-world concerns about privacy and copyright, making it valuable for industrial applications where data sensitivity is a concern.

Weaknesses

Incomplete Protection: The method reduces but does not eliminate the risk of memorization. In adversarial scenarios, some memorization can still occur.

Training Overhead: Dropped tokens provide no training signal (with one token in four dropped, only 75% of positions contribute to the loss), so the model may need more training data or longer training to match the performance of a model trained with the standard loss.

Adversarial Limitations: While the method resists standard extraction methods, it may still be vulnerable to more sophisticated adversarial attacks.

Dependence on Masking Strategy: The effectiveness of the goldfish loss depends on the choice of masking strategy. Poor choices could reduce its effectiveness or degrade model performance.

Scalability to Larger Models: The paper leaves open how well the benefits of goldfish loss scale to much larger models with tens or hundreds of billions of parameters; this requires further investigation.
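The dependence on masking strategy can be made concrete. A mask tied to position, like a drop-every-k-th rule, drops different tokens when the same passage appears at a different offset, so across several copies the model may end up trained on every token. A mask computed from a hash of the h preceding tokens drops the same tokens wherever the passage appears. The paper discusses a hashed mask along these lines; this sketch is an illustrative re-implementation under assumptions (SHA-256 as the hash, h = 13, made-up integer token IDs), not the authors' code.

```python
import hashlib
from typing import List, Sequence


def static_mask(tokens: Sequence[int], k: int) -> List[bool]:
    """True = keep in loss. Drops every k-th position of the training window."""
    return [(i + 1) % k != 0 for i in range(len(tokens))]


def hashed_mask(tokens: Sequence[int], k: int, h: int = 13) -> List[bool]:
    """True = keep in loss. Drops a position when a hash of the h preceding
    tokens lands in bucket 0 of k, so the decision depends only on local
    context, not on where the passage sits in the window."""
    if k < 2:
        raise ValueError("k must be at least 2")
    mask = []
    for i in range(len(tokens)):
        context = tuple(tokens[max(0, i - h):i])
        digest = hashlib.sha256(repr(context).encode("utf-8")).digest()
        mask.append(int.from_bytes(digest[:8], "big") % k != 0)
    return mask


def passage_flags(mask: Sequence[bool], start: int, length: int, skip: int) -> List[bool]:
    """Mask flags for a passage starting at `start`, ignoring its first `skip`
    tokens (whose hash context still reaches into the preceding text)."""
    return list(mask[start + skip:start + length])


if __name__ == "__main__":
    k, h = 4, 13
    passage = list(range(1000, 1060))          # hypothetical token IDs of one passage
    doc_a = [1, 2, 3] + passage                # the passage after 3 other tokens...
    doc_b = [7, 8, 9, 10, 11, 12] + passage    # ...and elsewhere after 6 tokens

    for name, make_mask in [("static", lambda t: static_mask(t, k)),
                            ("hashed", lambda t: hashed_mask(t, k, h))]:
        flags_a = passage_flags(make_mask(doc_a), 3, len(passage), h)
        flags_b = passage_flags(make_mask(doc_b), 6, len(passage), h)
        print(name, "same tokens dropped in both copies:", flags_a == flags_b)
    # static same tokens dropped in both copies: False
    # hashed same tokens dropped in both copies: True
```

The comparison skips the first h tokens of the passage because their hash context still reaches into the text that precedes it, so those decisions can legitimately differ between copies.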

Overall, the goldfish loss presents a promising approach to mitigating memorization in LLMs, balancing the need to protect sensitive information while maintaining model performance. However, it is not a complete solution and should be used alongside other privacy-preserving techniques.

Question 2 of 5 for ChatGPT-4o: What are the most remarkable and controversial findings and statements in the paper?

ChatGPT-4o’s answer:

Remarkable and Controversial Findings and Statements

Remarkable Findings:

Significant Reduction in Memorization: The goldfish loss technique significantly reduces the ability of models to memorize and regenerate verbatim sequences from the training data. This is a notable finding because it directly addresses the privacy and copyright risks associated with LLMs.

Minimal Impact on Performance: Despite reducing memorization, the models trained with goldfish loss maintain their performance on various downstream benchmarks, indicating that the technique doesn't compromise the overall utility of the model.

Resilience in Extreme Scenarios: The technique is effective even in extreme training scenarios designed to promote memorization. For instance, training a model on a small number of Wikipedia articles for many epochs shows that goldfish loss can prevent verbatim memorization in such aggressive conditions.

Adversarial Extraction Resistance: The paper demonstrates that models trained with goldfish loss are more resistant to adversarial extraction methods, such as membership inference attacks and adaptive beam search attacks, although not entirely immune.
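Membership inference, one of the attacks mentioned above, asks whether a specific text was part of the training set. A common baseline (shown for illustration; not necessarily the paper's exact setup) scores a candidate by the model's average loss on it, optionally divided by the text's zlib-compressed size so that inherently repetitive text is not mistaken for memorized text. The log-probabilities below are hypothetical placeholders for what the model under test would return. One plausible reason such tests can still work against goldfish models is that most tokens of a training passage are still supervised, so the passage's overall loss can stay low.

```python
import zlib
from typing import Sequence


def loss_score(target_log_probs: Sequence[float]) -> float:
    """Mean negative log-likelihood of the text under the model.

    Training data tends to get unusually low loss, so a low score is weak
    evidence that the text was a training member."""
    return -sum(target_log_probs) / len(target_log_probs)


def zlib_ratio_score(text: str, target_log_probs: Sequence[float]) -> float:
    """Loss divided by the text's zlib-compressed size in bits.

    Repetitive text compresses well and is easy to predict anyway; dividing
    by compressed size keeps such text from looking 'memorized'.
    Lower scores are more suspicious."""
    zlib_bits = 8 * len(zlib.compress(text.encode("utf-8")))
    return loss_score(target_log_probs) / zlib_bits


if __name__ == "__main__":
    # Hypothetical per-token log-probabilities from the model under test.
    candidates = {
        "candidate A": ("The quick brown fox jumps over the lazy dog.", [-0.1] * 10),
        "candidate B": ("Colorless green ideas sleep furiously tonight.", [-3.0] * 8),
    }
    for name, (text, lps) in candidates.items():
        print(name, loss_score(lps), zlib_ratio_score(text, lps))
```

In practice the threshold for calling a text a member is calibrated on texts known to be inside or outside the training set.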

Controversial Statements and Findings:

No Absolute Guarantees: The authors acknowledge that the goldfish loss comes with no absolute guarantees against data extraction. This is a crucial admission that, while the technique reduces risks, it does not eliminate them entirely. This might be seen as controversial since it suggests that even with this mitigation, LLMs might still pose privacy and copyright risks.

Comparison with Differential Privacy: The paper suggests that goldfish loss has potential advantages over differential privacy, particularly in scenarios where documents appear multiple times in the training data. This comparison might be controversial because differential privacy is a well-established, theoretically grounded method, whereas goldfish loss is presented as a more empirical, less formally guaranteed approach.

Scalability Concerns: The authors raise concerns about how well the benefits of goldfish loss will scale to models with tens or hundreds of billions of parameters. This is significant because it points to potential limitations in the applicability of the technique to the largest and most powerful LLMs.

Potential for Misinterpretation: The authors caution against mistaking the goldfish loss for a foolproof solution ("Don’t Mistake Fish Oil for Snake Oil"). This statement might be seen as controversial because it highlights the limitations and potential over-optimism about the efficacy of the proposed method.
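The differential-privacy comparison above hinges on repeated documents. As standard background, not a derivation from the paper: a randomized training procedure M is ε-differentially private if, for any two datasets D and D′ that differ in one record and any set of outcomes S, the first inequality below holds. The group-privacy property of differential privacy then gives the second inequality for datasets D and D″ that differ in m records, for example a document that appears m times:

```latex
\Pr[M(D) \in S] \le e^{\varepsilon} \, \Pr[M(D') \in S]
\qquad\Longrightarrow\qquad
\Pr[M(D) \in S] \le e^{m\varepsilon} \, \Pr[M(D'') \in S]
```

With ε = 1 and a document duplicated m = 10 times, the bound on the probability ratio grows from e ≈ 2.7 to e^10 ≈ 22,000, which offers little protection. A hashed goldfish mask instead drops the same tokens in every copy, so repetition never exposes those tokens to the loss; that is an empirical property of the method, not a formal bound of this kind.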

These points highlight the balance the paper strikes between proposing a novel, effective mitigation technique and acknowledging its limitations and the need for further research and complementary methods.

Question 3 of 5 for ChatGPT-4o: Do you have suggestions for improvements or future research?

ChatGPT-4o’s answer:

Suggestions for Improvements

Enhanced Masking Strategies:

Dynamic Masking: Investigate dynamic masking strategies that adaptively determine which tokens to exclude based on context and importance, rather than a fixed percentage or random selection.

Context-Aware Masking: Develop context-aware masking techniques that consider semantic relevance and importance of tokens, ensuring critical information is preserved while minimizing memorization.

Combination with Other Techniques:

Hybrid Approaches: Combine goldfish loss with other privacy-preserving techniques such as differential privacy, regularization methods, or model editing to provide multi-layered protection against memorization.

Data Sanitization: Incorporate data sanitization techniques to pre-process training data, removing or anonymizing sensitive information before training.

Scalability and Efficiency:

Scalability Studies: Conduct extensive scalability studies to understand how goldfish loss performs with larger models and more complex datasets. Optimize the technique for efficiency in terms of computation and training time.

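Resource Optimization: Develop methods to reduce the overhead introduced by goldfish loss. Computing the mask is cheap, so the main cost is the training signal lost on dropped tokens; more efficient token selection that spares the most informative tokens is one possible direction.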

Robust Evaluation:

Broader Benchmarking: Expand the range of benchmarks and real-world scenarios used to evaluate the effectiveness of goldfish loss, including diverse languages, domains, and applications.

Longitudinal Studies: Perform longitudinal studies to assess the long-term impacts of goldfish loss on model performance and memorization as the model is exposed to more data and retraining cycles.

Suggestions for Future Research

Adversarial Robustness:

Advanced Adversarial Attacks: Explore more sophisticated adversarial attack methods to test the robustness of goldfish loss, identifying potential weaknesses and areas for improvement.

Defense Mechanisms: Research new defense mechanisms that can work in tandem with goldfish loss to provide comprehensive protection against various types of data extraction attacks.

Theoretical Foundations:

Formal Guarantees: Develop theoretical frameworks to provide formal guarantees and bounds on the effectiveness of goldfish loss, similar to those provided by differential privacy.

Mathematical Models: Create mathematical models to better understand the dynamics of token dropping and its impact on memorization and learning.

User-Defined Privacy Levels:

Customizable Privacy Settings: Investigate methods to allow users to define their desired level of privacy protection, dynamically adjusting the goldfish loss parameters to meet specific needs.

Granular Control: Develop techniques to provide granular control over which types of data are more heavily protected, based on sensitivity and user preferences.

Application-Specific Adaptations:

Domain-Specific Models: Adapt goldfish loss for domain-specific models, such as medical, legal, or financial domains, where privacy concerns are particularly acute.

Fine-Tuning Phases: Explore the application of goldfish loss during fine-tuning phases for specialized tasks, ensuring privacy without compromising task-specific performance.

Interdisciplinary Approaches:

Collaboration with Legal Experts: Collaborate with legal experts to understand and address the regulatory implications of using goldfish loss, ensuring compliance with data protection laws.

Ethical Considerations: Work with ethicists to explore the ethical implications of using LLMs with reduced memorization capabilities, balancing privacy with the need for accountability and transparency.

By addressing these areas, future research can build on the promising results of goldfish loss, enhancing its effectiveness and applicability in real-world scenarios while ensuring robust privacy protections.

Question 4 of 5 for ChatGPT-4o: How can AI makers and content or rights owners make use of this information?

ChatGPT-4o’s answer:

How AI Makers Can Use This Information

Implementation of Goldfish Loss:

Incorporate into Training Pipelines: AI makers can integrate goldfish loss into their training pipelines to mitigate the risk of memorization. This can be particularly useful for companies developing large language models for commercial applications.

Customizable Privacy Levels: Provide customizable privacy settings where users or clients can choose the level of memorization mitigation based on their specific needs and regulatory requirements.

Product Development and Differentiation:

Privacy-Focused Products: Develop and market products that emphasize privacy and data protection, highlighting the use of goldfish loss as a key feature. This can attract privacy-conscious customers and differentiate products in the market.

Compliance and Certification: Work towards certifications and compliance with privacy regulations by demonstrating the effectiveness of goldfish loss in protecting user data.

Enhanced Security Protocols:

Multi-Layered Defense: Combine goldfish loss with other privacy-preserving techniques (e.g., differential privacy, data sanitization) to create a robust multi-layered defense against data extraction.

Regular Audits: Conduct regular audits and tests using adversarial attacks to ensure the models remain resistant to data extraction over time.

Transparency and Communication:

Public Communication: Clearly communicate the steps taken to mitigate memorization and protect data privacy, including the implementation of goldfish loss, to build trust with users and stakeholders.

User Education: Educate users and clients about the benefits and limitations of memorization mitigation techniques, helping them make informed decisions about data usage and protection.
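The regular audits recommended above could take the form of an extraction test: prompt the model with the beginning of a known training document and measure how much of the true continuation it reproduces. A minimal sketch follows; `generate` is a hypothetical wrapper around the model's greedy decoding, and the toy generator is a stand-in that has memorized a single document, not a real LLM.

```python
from typing import Callable, List, Sequence

# Hypothetical interface: given prefix token IDs and a count n, return the
# model's n greedily decoded continuation tokens.
Generator = Callable[[List[int], int], List[int]]


def extraction_rate(doc: Sequence[int], generate: Generator,
                    prefix_len: int = 32, suffix_len: int = 32) -> float:
    """Fraction of positions where the model's continuation matches the true suffix."""
    prefix = list(doc[:prefix_len])
    target = list(doc[prefix_len:prefix_len + suffix_len])
    if not target:
        raise ValueError("document is not longer than the prefix")
    output = generate(prefix, len(target))
    matches = sum(1 for got, want in zip(output, target) if got == want)
    return matches / len(target)


def audit(docs: Sequence[Sequence[int]], generate: Generator, threshold: float = 0.5,
          prefix_len: int = 32, suffix_len: int = 32) -> List[int]:
    """Indices of documents whose extraction rate exceeds the threshold."""
    return [i for i, doc in enumerate(docs)
            if extraction_rate(doc, generate, prefix_len, suffix_len) > threshold]


def make_toy_generator(memorized: Sequence[int], prefix_len: int = 32) -> Generator:
    """Stand-in 'model' that regurgitates one memorized document and outputs
    token 0 for any other prompt. Only for exercising the audit."""
    def generate(prefix: List[int], n: int) -> List[int]:
        if prefix == list(memorized[:prefix_len]):
            return list(memorized[prefix_len:prefix_len + n])
        return [0] * n
    return generate


if __name__ == "__main__":
    seen = list(range(1, 65))        # hypothetical training document (token IDs)
    unseen = list(range(100, 164))   # hypothetical held-out document
    model = make_toy_generator(seen)
    print(audit([seen, unseen], model))  # [0]: only the memorized document is flagged
```

For a goldfish-trained model, an audit like this would be expected to show lower rates on training documents, since continuations tend to break at dropped positions. Position-by-position matching is crude; exact match of the whole continuation or fuzzy overlap scores are common alternatives.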

How Content or Rights Owners Can Use This Information

Data Sharing Agreements:

Negotiate Terms: When sharing data with AI makers, content or rights owners can negotiate terms that require the use of memorization mitigation techniques like goldfish loss to protect their content.

Audit Rights: Include clauses that allow for regular audits and transparency reports to ensure compliance with data protection measures.

Protection of Intellectual Property:

Prevent Unauthorized Reproduction: Require the use of memorization mitigation techniques to reduce the likelihood that AI models memorize and reproduce copyrighted material verbatim, keeping in mind that these techniques lower the risk rather than eliminate it.

Legal Safeguards: Leverage these techniques as part of a broader strategy to comply with intellectual property laws and reduce the risk of legal issues related to AI-generated content.

Collaboration with AI Makers:

Joint Development: Collaborate with AI developers to create domain-specific models that incorporate goldfish loss, ensuring that sensitive or proprietary content is protected during model training and deployment.

Feedback Loop: Establish a feedback loop where content owners can report instances of memorized content being generated by AI models, allowing for continuous improvement of memorization mitigation techniques.

Public Advocacy and Policy Influence:

Advocate for Standards: Advocate for industry standards and best practices that include the use of techniques like goldfish loss to protect content and user data.

Influence Policy: Work with policymakers to create regulations that require AI developers to implement effective memorization mitigation strategies, ensuring widespread adoption and compliance.
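For the feedback loop described above, rights owners need a concrete way to flag AI output that copies a protected work. One illustrative check, assuming that long shared word sequences are the signal of interest, looks for word n-grams (runs of n consecutive words) that occur in both texts. The 8-word default is an arbitrary choice, and a match is evidence of verbatim copying only; it does not settle any legal question.

```python
import re
from typing import List, Set, Tuple


def words(text: str) -> List[str]:
    """Lowercase word tokens, ignoring punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def ngrams(tokens: List[str], n: int) -> Set[Tuple[str, ...]]:
    """All runs of n consecutive words (empty if the text is shorter than n)."""
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def shared_ngrams(output: str, protected: str, n: int = 8) -> Set[Tuple[str, ...]]:
    """Word n-grams that appear in both the model output and the protected text."""
    return ngrams(words(output), n) & ngrams(words(protected), n)


def flag_output(output: str, protected: str, n: int = 8) -> bool:
    """True if the output shares at least one n-word run with the protected text."""
    return bool(shared_ngrams(output, protected, n))


if __name__ == "__main__":
    protected = ("It was the best of times, it was the worst of times, "
                 "it was the age of wisdom")
    output = "He wrote: it was the best of times, it was the worst of times."
    print(flag_output(output, protected))              # True
    print(len(shared_ngrams(output, protected)))       # 5
```

Normalizing case and punctuation means lightly reformatted copies are still caught; paraphrased copying is not, which matches the focus of goldfish loss on verbatim reproduction.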

Mutual Benefits

Building Trust and Collaboration:

Shared Goals: Both AI makers and content or rights owners share a common goal of protecting data and intellectual property. Collaborative efforts can lead to better solutions and trust between the parties.

Innovation and Improvement: Continuous collaboration can drive innovation in privacy-preserving techniques, leading to more effective methods for mitigating memorization and protecting sensitive information.

Consumer Confidence:

Enhanced Privacy: By implementing robust memorization mitigation strategies, both parties can enhance consumer confidence in AI technologies, ensuring that users feel their data and content are secure.

Market Advantage: Companies that prioritize and effectively communicate their commitment to privacy and intellectual property protection can gain a competitive advantage in the marketplace.

By leveraging the insights from the paper on goldfish loss, AI makers and content or rights owners can better protect sensitive information, comply with regulatory requirements, and build trust with users and stakeholders.

Question 5 of 5 for ChatGPT-4o: Will the use of this methodology in any way protect AI makers against legal actions from rights owners, when the AI model has been trained on copyrighted works without permission or compensation?

ChatGPT-4o’s answer:

The use of goldfish loss or similar memorization mitigation techniques can offer some degree of protection to AI makers against legal actions from rights owners, but it is not a foolproof solution. Here's an in-depth look at the implications and limitations:

Potential Protections

Reduced Risk of Verbatim Reproduction:

Decreased Likelihood of Copyright Infringement: By reducing the ability of the model to reproduce verbatim text from training data, goldfish loss can decrease the risk that the model's outputs infringe copyrighted works.

Demonstration of Due Diligence: Implementing goldfish loss shows that AI makers are taking proactive steps to prevent the misuse of copyrighted content, which could be viewed favorably in legal contexts as a form of due diligence.

Compliance with Privacy Standards:

Adherence to Best Practices: Using such techniques may help AI makers align with industry best practices for data privacy and protection, which could be relevant in legal defenses and regulatory compliance.

Minimized Impact on Copyrighted Content:

Less Direct Replication: By making it less likely that the model memorizes and reproduces specific sequences, the technique may narrow the legal issues tied to verbatim replication in outputs, although the works themselves are still used in training.

Limitations and Remaining Risks

No Absolute Legal Protection:

Legal Uncertainty: The use of memorization mitigation techniques does not provide absolute legal protection against copyright infringement claims. Copyright law is complex, and the use of copyrighted works without permission or compensation can still lead to legal actions, regardless of the mitigation strategies in place.

Copyright Law Variations: Different jurisdictions have varying interpretations and implementations of copyright law, and what might be acceptable in one region may not be in another.

Adversarial and Sophisticated Attacks:

Vulnerabilities: Despite reducing the risk of verbatim reproduction, sophisticated adversarial attacks could still potentially extract copyrighted content from the models, leaving AI makers vulnerable to legal challenges.

Licensing and Permissions:

Need for Clear Permissions: The safest legal approach remains obtaining explicit permissions and licenses for using copyrighted content. Relying solely on technical mitigations like goldfish loss does not replace the need for proper licensing agreements.

Compensation and Fair Use: Issues related to fair use, compensation, and the ethical use of copyrighted material remain pertinent and must be addressed through legal and contractual means, not just technical solutions.

Recommendations for AI Makers

Obtain Licenses and Permissions:

Legal Agreements: Secure proper licenses and permissions for any copyrighted material used in training datasets to avoid legal issues.

Compensation Models: Develop and implement compensation models so that rights owners are fairly paid for the use of their content.

Implement Comprehensive Compliance Strategies:

Combine Approaches: Use goldfish loss in conjunction with other privacy-preserving and compliance techniques, such as differential privacy and data sanitization.

Regular Audits: Conduct regular audits to ensure models do not inadvertently reproduce copyrighted content.

Transparency and Communication:

Clear Policies: Develop clear policies and documentation demonstrating the steps taken to mitigate memorization and protect copyrighted content.

Stakeholder Engagement: Engage with rights owners and other stakeholders to build trust and collaborative frameworks for the use of their content.

Legal and Ethical Guidance:

Consult Legal Experts: Regularly consult with legal experts to stay updated on copyright laws and ensure compliance.

Ethical Considerations: Consider the ethical implications of using copyrighted material and strive for practices that respect the rights and contributions of content creators.

Conclusion

While the use of goldfish loss and similar techniques can mitigate some risks and demonstrate a commitment to responsible AI development, they are not a substitute for obtaining proper permissions and licenses. AI makers should adopt a holistic approach that combines technical, legal, and ethical strategies to protect themselves from legal actions and ensure the fair use of copyrighted works.